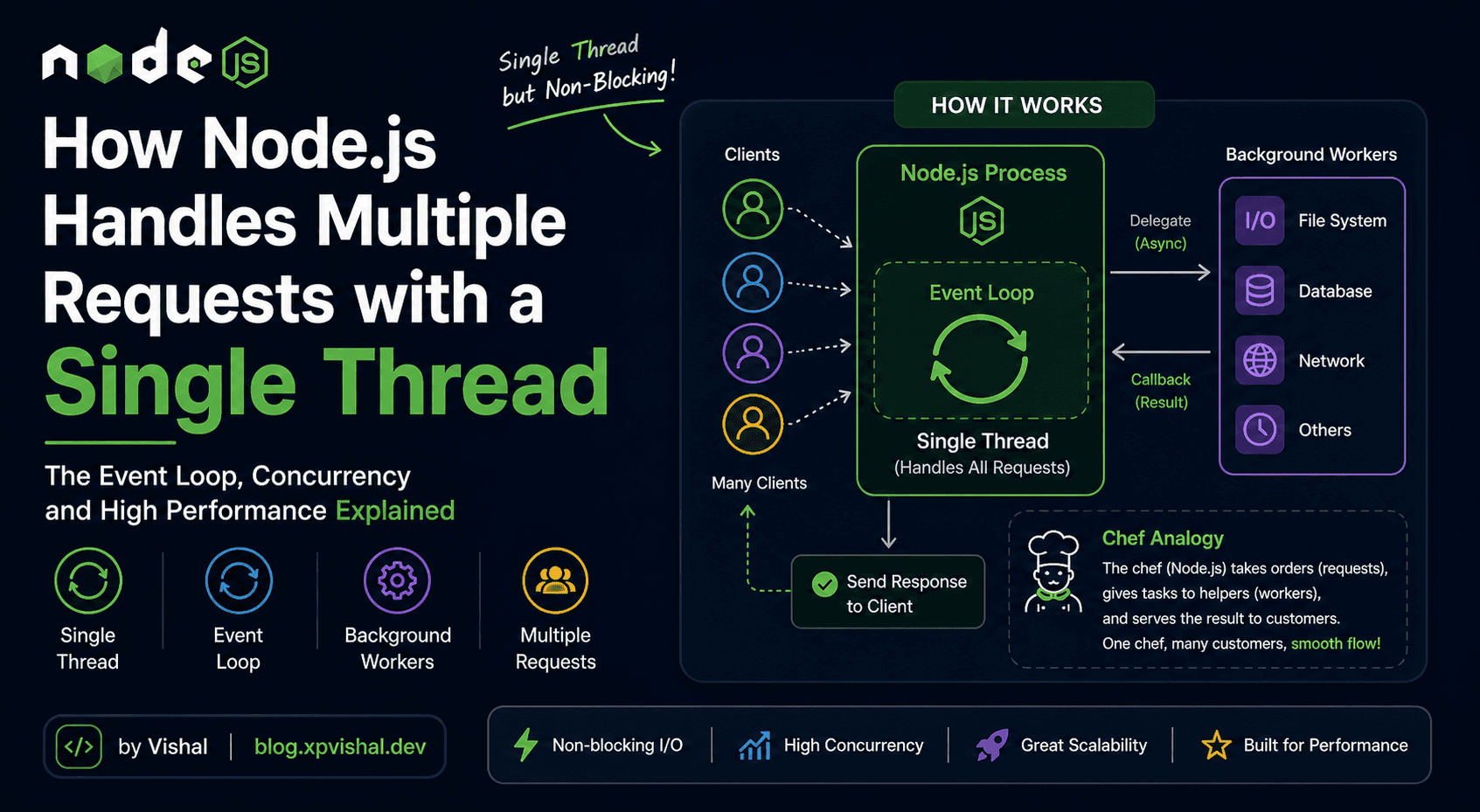

How Node.js Handles Multiple Requests with a Single Thread

Node.js runs your JavaScript on a single thread, and that's not a weakness. Here's how it handles thousands of concurrent connections without dedicating a thread to each one.

Table of Contents

The Single-Threaded Nature of Node.js

The Event Loop — The Real Engine

Delegating Tasks to Background Workers

Handling Multiple Client Requests

Why Node.js Scales So Well

01 — Foundations

The Single-Threaded Nature of Node.js

Many traditional web servers (such as classic Java servlet containers, or Apache running PHP) dedicate a thread or a process to every incoming request. A thread is like a dedicated worker: it picks up a request, works on it from start to finish, and only then moves on to the next one.

This sounds fine until you have 10,000 users hitting your server at once. You'd need 10,000 threads, each reserving memory for its stack and adding scheduling overhead, even while it does nothing but wait. That gets expensive fast.

Node.js takes a completely different approach. It runs your JavaScript on a single thread — one worker — for everything. No matter how many requests come in, there's only ever one thread executing your code.

🔴 Traditional Server (multi-thread)

1 request = 1 new thread

Thread sits idle waiting for DB

1000 requests = 1000 threads

High memory usage per connection

Thread switching overhead adds up

💚 Node.js (single thread)

1000 requests = still 1 thread

Thread never idles — moves on

Tiny memory footprint per connection

No thread switching overhead

Scales to massive concurrency

The question is obvious: if there's only one thread, how does it avoid getting stuck while waiting for a database query or a file read? The answer is the event loop.

02 — The Core Mechanism

The Event Loop — The Real Engine

The event loop is the heart of Node.js. It's what allows a single thread to appear to do many things at once. The key insight is this: the JavaScript thread does not sit and wait on slow operations. Whenever it hits one (reading a file, querying a database, making an API call), it starts the operation, registers what should happen when it finishes, moves on to the next piece of work, and comes back when the result is ready.

Analogy — The Chef

Imagine a chef in a restaurant. A bad chef would take one order, walk to the kitchen, stand in front of the oven staring at it for 20 minutes while the food cooks, then serve the dish — and only then take the next order.

A good chef takes Order 1, puts it in the oven, immediately takes Order 2, puts it on the grill, takes Order 3, starts the soup — then circles back to check on each dish when it's ready. The chef is always moving. Nothing is waited on.

Node.js is the good chef. Your JavaScript thread is the chef. The oven and grill are whatever does the slow work in the background: the operating system for network I/O, and libuv's thread pool for things like file reads. The event loop is the chef's mental loop of: "what's ready for me to handle next?"

The loop runs in phases, continuously checking: "Is there any callback whose work is done and needs running?" If yes, it runs it. If not, it sleeps until the operating system reports a new event or the next timer is due, so an idle server uses almost no CPU.
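The ordering the loop enforces can be seen in a few lines. This is a minimal sketch, assuming a reasonably recent Node.js version; the names `order` and `finished` are made up for illustration. It records when synchronous code, a promise callback, and a timer callback actually run.

```javascript
const order = []

// Timer callback: queued for the timers phase of a later loop iteration.
setTimeout(() => order.push('timer callback'), 0)

// Promise callback: a microtask, run once the current synchronous code has finished.
Promise.resolve().then(() => order.push('promise callback'))

// Plain synchronous code always runs first, to completion.
order.push('synchronous code')

// Check the recorded order a little later, once everything above has fired.
const finished = new Promise((resolve) => {
  setTimeout(() => {
    console.log(order.join(' -> '))
    resolve(order)
  }, 20)
})
// Prints: synchronous code -> promise callback -> timer callback
```

Even though the timer was registered first with a delay of 0, its callback runs last: the thread finishes the current code, drains pending promise callbacks, and only then returns to the loop to pick up timers.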

03 — Under the Hood

Delegating Tasks to Background Workers

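When Node.js hits something slow, like reading a file or querying a database, it does not block the thread and wait. Instead, it hands the task to libuv, the C library underneath Node.js. For network I/O, which covers most database queries and API calls, libuv asks the operating system to watch the socket (epoll on Linux, kqueue on macOS, IOCP on Windows), so no thread waits at all. For work that has no non-blocking OS interface, such as file system calls, dns.lookup, and some crypto and zlib functions, libuv uses a small pool of background threads (the thread pool).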

Your JavaScript code just registers a callback: "Hey, when this is done, run this function." Then it immediately moves on.

```javascript
const fs = require('fs')

// Node does NOT stop here and wait for the file.
// It tells libuv: "read this file, call me when done."
fs.readFile('data.json', 'utf8', (err, data) => {
  // This callback only runs once the read has finished (or failed).
  // Meanwhile, the thread was free to handle other requests.
  if (err) {
    console.error('Read failed:', err.message)
    return
  }
  console.log('File ready:', data)
})

// This line runs IMMEDIATELY, before the file is even read
console.log('Moved on already!')

// Output:
// Moved on already!
// File ready: { ... }
```

This is the core of Node's model. The single thread is never sitting around waiting. The moment it can't proceed without waiting, it registers a callback and moves on.
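The same hand-off can be written with promises instead of a callback. The sketch below is only an illustration: the function name `readWithoutBlocking` and the temporary file it creates are assumptions made so the example can run on its own, and it uses the promise-based API exposed as `require('fs').promises`.

```javascript
const fs = require('fs').promises
const os = require('os')
const path = require('path')

// Hypothetical helper: writes a small temp file, reads it back, and records
// the order in which things happen.
async function readWithoutBlocking() {
  const events = []
  const file = path.join(os.tmpdir(), `node-demo-${process.pid}.json`)
  await fs.writeFile(file, JSON.stringify({ hello: 'world' }))

  // Start the read. This returns a promise right away; nothing blocks here.
  const pending = fs.readFile(file, 'utf8').then((data) => {
    events.push('file ready')
    return data
  })

  // This runs before the read has completed.
  events.push('moved on')

  const data = await pending
  await fs.unlink(file) // clean up the temp file
  return { events, data }
}

const result = readWithoutBlocking()
result.then(({ events }) => console.log(events.join(', ')))
// Prints: moved on, file ready
```

Inside an async function, `await` pauses only that function. The thread itself goes back to the event loop and keeps serving other work.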

🔑

Concurrency, not parallelism

Node.js achieves concurrency (many things in progress at once), not parallelism (many things literally running at the same instant). Your JS code still runs one statement at a time, but the waiting happens out of the way, in the operating system or in background workers.

04 — In Practice

Handling Multiple Client Requests

Let's look at a real Express server and trace exactly what happens when three requests arrive at nearly the same time:

```javascript
const express = require('express')

const app = express()

// getUserFromDB is assumed to be defined elsewhere and to return a Promise.
app.get('/user/:id', async (req, res, next) => {
  try {
    // 1. Request arrives: thread picks it up
    const id = req.params.id

    // 2. Hits the DB: thread DELEGATES this and moves on
    const user = await getUserFromDB(id)

    // 3. Thread is free for other requests while DB works
    // 4. When DB responds, event loop resumes THIS function
    res.json(user)
  } catch (err) {
    // Hand failures to Express's error handler so the request does not hang
    next(err)
  }
})

app.listen(3000)
```

Even with 1,000 simultaneous requests hitting /user/:id, the thread is never stuck. While 999 requests are waiting on their individual DB results (the operating system watches those sockets, so no thread is parked on them), the thread stays available to accept new requests, run ready callbacks, or respond to finished work.
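To trace the three-request scenario without a real database or HTTP layer, here is a rough sketch. `getUserFromDB` is a made-up stand-in that resolves after a delay, and `handleRequest` mirrors the shape of the route handler; neither belongs to Express or to any database driver.

```javascript
// Hypothetical stand-in for a database call: resolves after `ms` milliseconds.
function getUserFromDB(id, ms) {
  return new Promise((resolve) => {
    setTimeout(() => resolve({ id, name: `user-${id}` }), ms)
  })
}

const trace = []

// Same shape as the route handler, minus the HTTP layer.
async function handleRequest(id, dbLatencyMs) {
  trace.push(`request ${id}: started`)
  const user = await getUserFromDB(id, dbLatencyMs) // thread is released here
  trace.push(`request ${id}: responded`)
  return user
}

// Three requests arrive back to back; the first one has the slowest query.
const allDone = Promise.all([
  handleRequest(1, 90),
  handleRequest(2, 30),
  handleRequest(3, 60),
]).then(() => trace)

allDone.then((lines) => console.log(lines.join('\n')))
// Prints:
// request 1: started
// request 2: started
// request 3: started
// request 2: responded
// request 3: responded
// request 1: responded
```

All three handlers start before any of them finishes, and responses go out in the order the simulated queries complete, not the order the requests arrived.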

⚠️

The one thing that does block Node

CPU-heavy synchronous code, like a tight loop doing expensive work on millions of items, will block the single thread and freeze your server for everyone. Node is optimised for I/O-heavy work (waiting on databases, files, APIs), not for heavy computation. For CPU tasks, use Worker Threads or offload to a separate service.
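One way to move a CPU-heavy loop off the main thread is the built-in worker_threads module. This is a minimal sketch, not a production pattern: `workerSource` and `sumInWorker` are illustrative names, and the worker code is passed inline with the `eval: true` option only so the example fits in one file. A real project would more likely keep the worker in a separate file.

```javascript
const { Worker } = require('worker_threads')

// Code that runs inside the worker thread (hypothetical CPU-heavy task).
const workerSource = `
  const { parentPort, workerData } = require('worker_threads')
  let sum = 0
  for (let i = 0; i < workerData.limit; i++) sum += i
  parentPort.postMessage(sum)
`

// Runs the loop on a separate thread and resolves with its result.
function sumInWorker(limit) {
  return new Promise((resolve, reject) => {
    const worker = new Worker(workerSource, { eval: true, workerData: { limit } })
    worker.once('message', resolve)
    worker.once('error', reject)
    worker.once('exit', (code) => {
      if (code !== 0) reject(new Error(`Worker stopped with exit code ${code}`))
    })
  })
}

const workerResult = sumInWorker(1000000)
workerResult.then((sum) => console.log('Sum from worker:', sum))

// Runs right away: the main thread is not waiting for the loop to finish.
console.log('Main thread is free while the worker computes')
// Prints:
// Main thread is free while the worker computes
// Sum from worker: 499999500000
```

Starting a worker has a cost of its own, so this only pays off for work that would otherwise hold the main thread for a noticeable time.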

The lifecycle of every request in Node.js follows the same pattern:

STEP 1

**Request arrives**

The event loop picks up the incoming request, and the JS thread starts executing the handler function as soon as it is free.

STEP 2

**Hits a slow operation (I/O)**

Node hands it to libuv with a callback. The JS thread is instantly free.

STEP 3

**Thread handles other requests**

While the background worker does the slow work, the thread picks up and processes new incoming requests.

STEP 4

**Worker signals completion**

libuv pushes the callback into the event queue. The event loop picks it up on the next tick.

STEP 5

**Callback runs, response sent**

The JS thread resumes the original handler and sends the response to the client. Done.
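The five steps above can be mimicked with a toy model. To be clear, this is not how libuv is implemented: `ToyEventLoop`, its `delegate` method, and the idea of measuring background work in loop "ticks" are all inventions for illustration. The sketch only shows the shape of the cycle: run what is ready, hand slow work off, pick up the callback once the work is done.

```javascript
// Toy model only: real Node.js uses libuv's phased loop, not a plain array.
class ToyEventLoop {
  constructor() {
    this.readyQueue = [] // callbacks that can run now
    this.background = [] // simulated slow work still in progress
    this.tick = 0
  }

  // Steps 1 and 5: something is ready for the JS thread to run.
  enqueue(callback) {
    this.readyQueue.push(callback)
  }

  // Step 2: hand slow work off; it finishes `ticks` loop iterations from now.
  delegate(ticks, callback) {
    this.background.push({ doneAt: this.tick + ticks, callback })
  }

  run() {
    while (this.readyQueue.length > 0 || this.background.length > 0) {
      // Step 4: finished background work moves its callback to the ready queue.
      const finished = this.background.filter((job) => job.doneAt <= this.tick)
      this.background = this.background.filter((job) => job.doneAt > this.tick)
      for (const job of finished) this.readyQueue.push(job.callback)

      // Steps 1, 3 and 5: the single thread runs each ready callback in turn.
      const batch = this.readyQueue
      this.readyQueue = []
      for (const callback of batch) callback()

      this.tick += 1
    }
  }
}

const log = []
const loop = new ToyEventLoop()

function handleRequest(name, dbTicks) {
  log.push(`${name}: handler starts`)
  loop.delegate(dbTicks, () => log.push(`${name}: response sent`))
}

loop.enqueue(() => handleRequest('A', 3)) // slow query
loop.enqueue(() => handleRequest('B', 1)) // fast query
loop.run()

console.log(log.join('\n'))
// Prints:
// A: handler starts
// B: handler starts
// B: response sent
// A: response sent
```

Request B arrives second but is answered first, because the single thread never waited on A's slower query.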

05 — The Big Picture

Why Node.js Scales So Well

Now the full picture makes sense. Node.js scales because it never wastes the thread on waiting. Waiting is always outsourced. The thread only does real work — running your code, sending responses, handling logic.

Compare a traditional server handling 1,000 requests that each wait 100ms on a database:

```javascript
// Traditional multi-threaded server
// 1000 requests × 1 thread each = 1000 threads
// Each thread: ~1MB reserved for its stack (a common default; varies by platform)
// Total: up to ~1GB reserved just to keep threads alive while waiting

// Node.js
// 1000 requests share 1 JavaScript thread
// libuv worker pool: 4 threads by default (configurable via UV_THREADPOOL_SIZE)
// Total: a handful of threads, no matter how many requests are waiting
```

This is why Node.js became the go-to choice for real-time apps, chat servers, streaming services, and high-traffic APIs: any workload where the bottleneck is I/O, not raw computation.

✅

When Node.js shines

REST APIs, real-time chat (WebSockets), streaming data, microservices, GraphQL servers, and any server that spends most of its time waiting on databases or external APIs. For image processing, video encoding, or heavy math, reach for Worker Threads or a different tool entirely.